We at Twingly have been delivering Nordic blog data since 2006 and have long considered ourselves the market leader in Scandinavia.

However, in our quest to find blogs in the different corners of the world, we started to sense that our Nordic blog coverage was slipping somewhat. We have a lot of automatic methods to find new blogs of course, but each market needs attention and care to flourish.

To increase our Nordic coverage, we selected the languages that we support: Swedish, Norwegian, Danish, Finnish and Icelandic. There are also other languages in this region, such as Sami and Kven, but we rarely get requests for these 🙂

We gave the Nordic languages our best shot: we scanned our current blogs for links to other blogs, made sure that all of them are in our automatic system for newly published blog posts, and so on. Since we also have pretty strict rules about what kind of blogs we welcome into our universe, in order to prevent spam, we also ensured that all new blogs were approved correctly.
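As a rough illustration of the link-scanning step, here is a minimal Python sketch. It is not our production crawler: the names `LinkCollector`, `candidate_blog_hosts` and `known_hosts` are made up for this example, and it only assumes that you already have the HTML of a blog page and a set of hosts that are already covered.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag in a page (hypothetical helper)."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def candidate_blog_hosts(page_url, html, known_hosts):
    """Return hosts linked from the page that are neither the page's own
    host nor already in known_hosts (an assumed set of covered hosts)."""
    collector = LinkCollector()
    collector.feed(html)
    own_host = urlparse(page_url).netloc.lower()
    found = set()
    for href in collector.hrefs:
        # Resolve relative links against the page URL.
        parsed = urlparse(urljoin(page_url, href))
        host = parsed.netloc.lower()
        if parsed.scheme not in ("http", "https") or not host:
            continue  # skip mailto:, javascript: and similar links
        if host != own_host and host not in known_hosts:
            found.add(host)
    return sorted(found)
```

The hosts returned here are only candidates. Whether a candidate really is a blog, and not spam, still has to be decided by a separate approval step.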

Whenever we make specific efforts like this, we always try to automate the processes that we see giving good results. In this case it has led to enhanced services for crawling, and once they are in production we list them in Source discovery and ingestion.

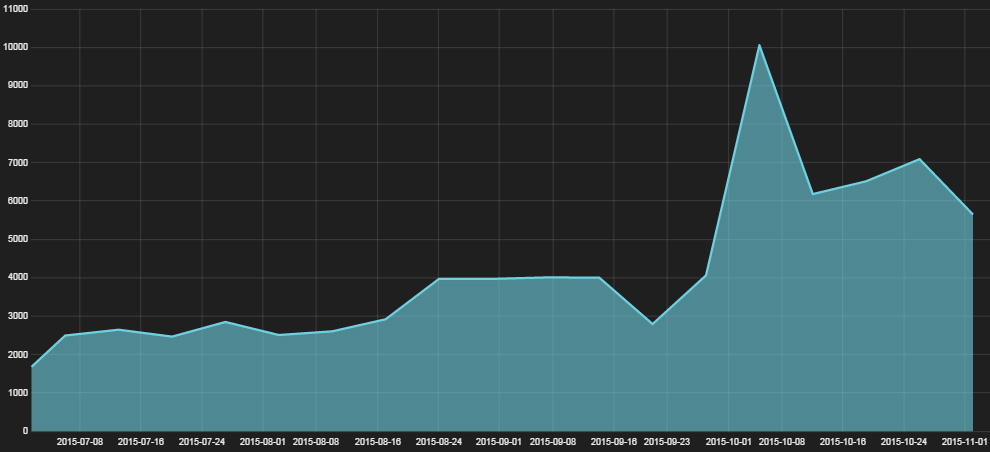

As always when it comes to coverage, you have to wait some time to see the effect. Now, one month later, we can see that the average number of daily blog posts has increased greatly in Finnish (30-40%) as well as in Icelandic (about 75%).
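For readers who want to run the same kind of comparison on their own data, the percentage figures are simply the relative change in average daily posts between two periods. A minimal sketch, assuming you have one list of daily post counts per period (the function name `percent_increase` is made up for this example):

```python
from statistics import mean


def percent_increase(before_counts, after_counts):
    """Relative change, in percent, between the average daily post counts
    of two periods. Both arguments are lists of daily counts."""
    before = mean(before_counts)
    after = mean(after_counts)
    if before == 0:
        raise ValueError("average for the 'before' period must be non-zero")
    return (after - before) / before * 100
```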

However, we didn’t see the same increase in the other Nordic languages. In one way that is good, because it means that we already have good coverage in Swedish, Norwegian and Danish, but it is always disappointing when you make an effort and the results just confirm that you should have focused elsewhere.

Once we have developed even more blog discovery techniques we will apply them to these languages too, to see if we can find those small sparkling blogs hiding somewhere behind dwarf birches, next to fjord beds or daydreaming under a windmill.

By Pontus Edenberg